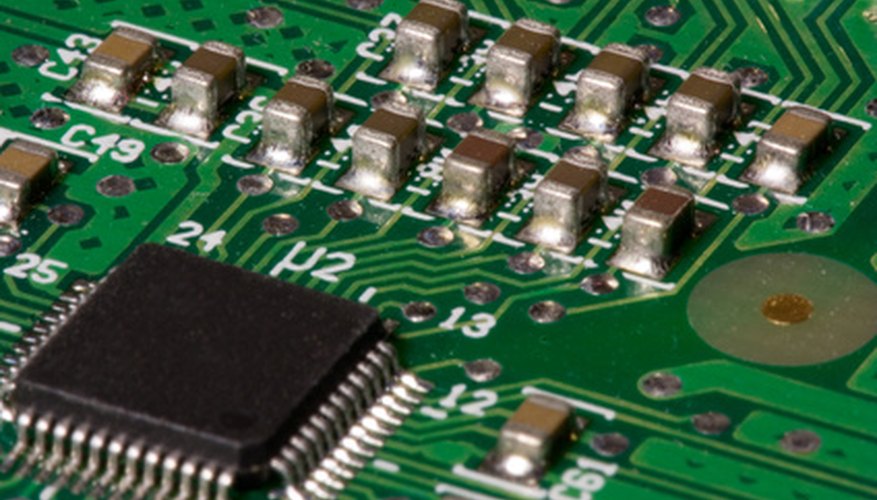

A Programmable Logic Controller (PLC) is a type of computer designed specifically for industrial applications. A microprocessor is the Central Processing Unit (CPU) of a computer.

Differences

All PLCs contain one or more microprocessors, but not all microprocessors are used in PLCs. Desktop computers, appliances, automobiles and consumer electronics are all likely to contain one or more microprocessors.

Features

A microprocessor is only one component of an electronic device and requires additional circuits, memory and firmware or software before it can function. A PLC is a complete computer built around a microprocessor. It can be programmed or reprogrammed to control different types of devices, using relatively simple programming languages such as Ladder Logic. A Ladder Logic program resembles a circuit diagram: switches, coils, relays and other electrical symbols represent true/false conditions, counters, timers and numeric computations.
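To make the Ladder Logic idea more concrete, here is a minimal sketch in Python of how a PLC might evaluate a single rung on each pass through its program. It is an illustration only, not real PLC code: the rung (a common start/stop "seal-in" arrangement), the input names `start` and `stop`, and the functions `scan` and `run` are all assumptions made for this example.

```python
def scan(inputs, motor):
    """Evaluate one rung: (START or MOTOR) and not STOP -> MOTOR.

    The MOTOR contact in parallel with START keeps the output on
    after the start button is released (a "seal-in" arrangement).
    """
    return (inputs["start"] or motor) and not inputs["stop"]


def run(input_sequence):
    """Simulate repeated scans, one per entry in input_sequence."""
    motor = False  # the output coil starts de-energized
    history = []
    for inputs in input_sequence:
        motor = scan(inputs, motor)
        history.append(motor)
    return history


# Hypothetical button presses, one dictionary per scan.
sequence = [
    {"start": False, "stop": False},  # idle
    {"start": True, "stop": False},   # start pressed
    {"start": False, "stop": False},  # start released, motor stays on
    {"start": False, "stop": True},   # stop pressed
    {"start": False, "stop": False},  # stop released, motor stays off
]
history = run(sequence)
print(history)  # [False, True, True, False, False]
```

Each dictionary stands for the state of the input switches during one scan, and the printed list shows the motor output after each scan.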

History

PLCs were first introduced in the late 1960s, and were first used to control automobile assembly lines. The first PLCs resembled mainframes or minicomputers. As microprocessor technology evolved, PLCs became much smaller and gained new capabilities and processing power.

Applications

PLCs are now used in virtually every industry. PLCs control devices in factories, power plants, oilfields and even vehicles. Microprocessors are the "brains" of almost every electronic device.

Fun Fact

Before PLCs were invented, hard-wired electrical circuits controlled industrial devices. PLCs are basically "virtual" electrical circuits that can be reconfigured with a few keystrokes. A microprocessor's own hardware capabilities, by contrast, are fixed when it is manufactured.
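The "virtual circuit" idea can be sketched in Python by describing a rung as plain data, so that "rewiring" means editing the data rather than moving wires. This is only a rough illustration under stated assumptions: the `evaluate` function, the rung layout (parallel branches of series contacts) and the input names are all hypothetical and not taken from any real PLC product.

```python
def evaluate(rung, inputs):
    """Return True if any branch of the rung conducts.

    A rung is a list of parallel branches. Each branch is a list of
    (input_name, normally_open) contacts wired in series. A normally
    open contact conducts when its input is True; a normally closed
    contact conducts when its input is False.
    """
    return any(
        all(inputs[name] == normally_open for name, normally_open in branch)
        for branch in rung
    )


# Hypothetical input states.
inputs = {"door_closed": True, "button": False, "override": True}

# Original "wiring": the lamp needs door_closed AND button.
rung = [[("door_closed", True), ("button", True)]]
before = evaluate(rung, inputs)

# "Rewire" by editing data: add a parallel branch for an override switch.
rung.append([("override", True)])
after = evaluate(rung, inputs)

print(before, after)  # False True
```

With physical relays, adding the override branch would mean pulling new wire; here it is a one-line change to the rung description.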